Software testing teams use many types of manual testing. Each type answers a specific question and serves a distinct purpose, so relying on a single form of manual testing leaves gaps in coverage. Choosing the right combination of manual testing types for each project helps testers verify every part of the product under test, which is key to launching high-quality products.

Even in a world of widespread test automation, manual testing remains valuable and relevant to software teams. Automation cannot replicate human judgment, and because humans are the ones using the software, manual testing is a necessary part of building software that people enjoy using.

In this article, we look at some of the most widely used types of manual testing. For each type, we discuss who typically runs it, when it is used, and an example of it in practice.

Different Types of Manual Testing

Smoke Testing

Smoke testing is a high-level type of manual testing used to check whether the software meets its primary objectives without critical defects. It is non-exhaustive by design, because it is limited to verifying only the core functionality of the software.

Smoke testing is often used to verify a build after new functionality has been introduced. The QA team typically decides which parts of the software need to be assessed and then runs a suite of smoke tests against them. Smoke tests are a preliminary check, run ahead of more detailed, in-depth testing.

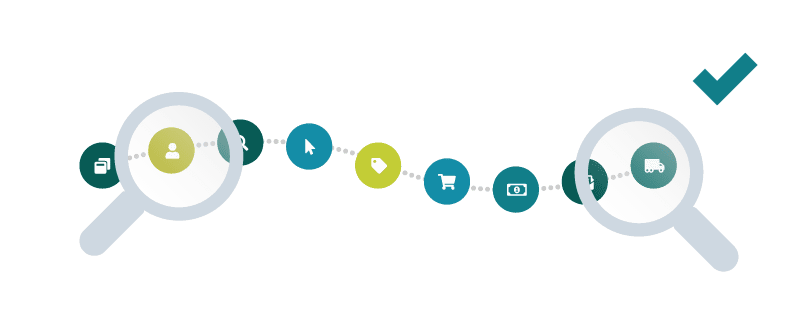

Example: Verifying a build that introduces a new feature, such as the ability to add multiple items to a shopping cart on an e-commerce site.

Cross Browser Testing

There is no guarantee that a website will look or behave identically in every browser, because each browser can interpret and render a webpage in its own way. This variability makes it important to perform cross browser testing before a website goes into production. The goal of cross browser testing is a consistent experience across all supported browsers.

Browser testing checks the functionality, design, accessibility, and responsiveness of an application. It is preferable to begin cross browser testing toward the end of the development cycle, so that most, if not all, core functionality is in place and can be checked in each browser. Cross browser testing is usually conducted by the QA team, designers, or both. Because designers know the intended look of every screen in fine detail, involving them can be beneficial.

Example: Checking that the UI renders and responds correctly in every supported browser.
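Because every area has to be checked in every browser, it can help to lay the work out as a checklist that testers fill in by hand. The Python sketch below is illustrative only: the browser list is an assumption (substitute your project's supported browsers), and `build_test_matrix` is a hypothetical helper, not part of any testing tool. The four areas are the ones named above.

```python
from itertools import product

# Assumed list of supported browsers; replace with the project's actual support list.
BROWSERS = ["Chrome", "Firefox", "Safari", "Edge"]

# The areas that browser testing checks, as listed in the text above.
AREAS = ["functionality", "design", "accessibility", "responsiveness"]


def build_test_matrix(browsers, areas):
    """Return one row per (browser, area) pair; testers record each result by hand."""
    return [
        {"browser": browser, "area": area, "result": None}  # None = not yet tested
        for browser, area in product(browsers, areas)
    ]


if __name__ == "__main__":
    matrix = build_test_matrix(BROWSERS, AREAS)
    print(f"{len(matrix)} manual checks to perform")
    for row in matrix:
        print(f"{row['browser']:<8} {row['area']}")
```

Adding one browser or one area grows the checklist multiplicatively, which is one reason teams usually agree on a supported-browser list before testing starts.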

Acceptance Testing

Finding bugs is the focus of most types of manual testing, but acceptance testing is different. Acceptance testing, often called User Acceptance Testing or UAT for short, reveals how closely the application meets the user’s needs and expectations.

Acceptance testing is performed once all known bugs have been addressed. The product should be market-ready at this stage, because acceptance testing is designed to give the user a clear view of how the application will look and behave in real life. It should be carried out by the client or by actual users of the product. Acceptance testing is one of the most important types of testing because it is the last testing step before production, performed after development and bug fixing are complete.

Example: Testing the end-to-end flow of an application. If a real estate application lets users upload photos and create listings, acceptance testing should verify that a user can complete that flow from start to finish.

Beta Testing

Beta testing is a common practice for obtaining feedback from real users during a soft launch before the product is made available to the general public. It allows software teams to gain valuable insights from a broad range of users through real-world use cases of the application.

Once internal teams have finished testing, the product can be released for beta testing. At this point, the application must be able to handle a high volume of traffic, especially if the beta is open to anyone.

Both closed and open beta tests can require extensive planning. In closed beta testing, access to the application is given to a restricted group of users who have been selected in advance, perhaps through an application and approval process. In open beta testing, anyone interested can use the software in its unreleased form, which brings the advantage of feedback from a wide and varied group of testers.

Example: A new integration for use with a third-party localization tool is ready for launch following months of development. To beta test the integration, 100 volunteer users have signed up. As early users, they will be testing the integration and providing feedback on usability and reliability issues.

Exploratory Testing

Exploratory testing has minimal structure or guidelines. Instead of following a set script for each test case, the tester follows their own initiative and curiosity, “exploring” and learning about the application while designing and running tests on the fly.

Exploratory testing is a form of ad-hoc testing that can be used at any point during development and testing whenever the team sees a need for it. Because it is informal, it is often performed by people other than testers, such as designers, product managers, or developers.

Example: A new feature is close to being released, and the support team conducts exploratory testing to discover whether the test cases anticipated every scenario. Exploratory testing gives them the opportunity to identify any critical bugs or usability issues that were missed earlier.

Negative Testing

Negative testing verifies how an application responds to deliberately invalid input. It can be conducted at various stages of development and testing, as long as error handling and exceptions have already been implemented. This type of testing is typically done by the QA team or engineers and often involves working with copywriters to ensure each exception shows an appropriate message.

Example: To log in to a website, we would generally expect to enter a user name and a password in two fields. Negative testing checks what happens when the form is deliberately submitted with only one of the fields filled in.
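Manual negative testing exercises the validation logic behind a form like this one. The Python sketch below is a minimal illustration under stated assumptions: `validate_login` is a hypothetical function and the error messages are placeholders, not any real site's behavior. It shows the kind of check being probed and lists the deliberately invalid inputs a tester might try by hand, each paired with the message the user should see.

```python
from typing import Optional

# Placeholder messages (hypothetical); copywriters would supply the final wording.
MISSING_USERNAME = "Please enter your user name."
MISSING_PASSWORD = "Please enter your password."


def validate_login(username: str, password: str) -> Optional[str]:
    """Return an error message for an empty field, or None if both are filled.

    Hypothetical sketch: this checks only for missing input, not credentials.
    """
    if not username.strip():  # treat a whitespace-only user name as empty
        return MISSING_USERNAME
    if not password:  # passwords are not stripped; spaces may be legitimate
        return MISSING_PASSWORD
    return None


# Deliberately invalid inputs and the message each one should produce.
NEGATIVE_CASES = [
    ("alice", "", MISSING_PASSWORD),      # password left empty
    ("", "s3cret", MISSING_USERNAME),     # user name left empty
    ("   ", "s3cret", MISSING_USERNAME),  # user name is only whitespace
    ("", "", MISSING_USERNAME),           # both empty: the first field is reported
]

if __name__ == "__main__":
    for username, password, expected in NEGATIVE_CASES:
        actual = validate_login(username, password)
        status = "PASS" if actual == expected else "FAIL"
        print(f"{status}: user={username!r} password={password!r} -> {actual!r}")
```

Pairing each invalid input with the exact expected message is where the copywriters' wording becomes part of the test: a tester checks not only that the form is rejected but that the user is told why.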

Usability Testing

Usability testing is the most psychologically focused of the manual testing types because it concerns how users feel when interacting with your product. This type of testing assesses the user-friendliness of your application by observing the behavior and emotional reactions of users. Are they confused or frustrated? Does your product let them achieve their aims in as few steps as possible? The resulting feedback can then be used to improve the user experience.

Usability testing can take place during any phase of development and can cover a single feature or an entire application, depending on its size.

When running usability tests, recruit genuine users who were not involved in building the application, so that the feedback reflects real-world use.

Example: You’re developing a new game for an e-learning platform, and you want to test the experience of starting, playing, and ending a game. Can players quickly find what to press and when? Do they feel satisfied with the experience?

Conclusion

With so many types of manual testing available, software testing teams can realistically bring a new product close to what its designers intended. When planning a testing project, consider the requirements, then select the combination of manual testing types that fits the job; you will not need to use them all.

About the writer

Jake Bartlett

Jake Bartlett lives and works in Nashville, Tennessee. He has a background in software testing, customer support, and project management.
